The history of misinformation is the history of communication.

The invention and proliferation of new communication technologies, from the printing press to the Internet, have all changed the information landscape and the course of human history. Arguably, both the biggest atrocities and important scientific and societal advancements might not have happened without our ability to communicate at scale.

Should we worry about AI flooding the information ecosystem with false or misleading information, potentially upending democratic society?

In this essay, I’m going to explore questions like:

How bad is misinformation really?

Is it a viral “infodemic” that will consume people’s sanity and destroy democracies, or just a moral panic?

Are most people gullible and misinformed?

Does technology make misinformation worse?

Are smart people less likely to believe misinformation?

What is misinformation?

Misinformation doesn’t only refer to false information. These days, most experts define it as misleading, incomplete or incorrect information. As a result, a relatively small part of all misinformation is strictly false; the vast majority of it is true or true-ish but misleading, incomplete, selective or deceptive. Disinformation, on the other hand, can also be true or false but is created and transmitted purposefully to change beliefs or public opinion; propaganda is disinformation spread by governments for political purposes. Some also use the term malinformation, usually defined as genuine information shared with the intent to cause harm, but I personally don’t find that it adds much to our discussion so I won’t use it. Throughout this article, I’ll mostly be using the word “misinformation” to refer to both mis- and disinformation, depending on the context.

The misinformation problem

Governments and mainstream institutions around the world have been grappling with misinformation since the 2016 US elections, where fake news and Russian disinformation campaigns were thought to have swayed the results in favor of Trump.[1] Recently, the World Economic Forum declared misinformation and disinformation the biggest risk facing the world over the next two years, ahead of other problems like extreme weather events, social polarization, and interstate armed conflict.

If dealing with misinformation were just about fact-checking, it probably wouldn’t be seen as a civilizational infodemic. However, because any content that omits some fact could be misleading with respect to the whole truth of the matter, both fringe and mainstream channels can be sources of misinformation or disinformation. Much content is not false but purposefully or inadvertently leads the viewer or reader to draw the “wrong” conclusions.

In this view, misinformation is everywhere and spreads through the Internet and society like a virus, infecting people with all kinds of misperceptions and false beliefs.

With this framing, misinformation researchers like Sander van der Linden have sought to study how society can reduce the spread of misinformation or its impact. In his book Foolproof, he describes various methods and research that aim to protect individuals against misinformation. He likens misinformation to a virus that we can inoculate people against by giving them a “vaccine,” which involves educating individuals about the fingerprints or markers of misinformation and applying techniques like “prebunking” (as opposed to debunking).

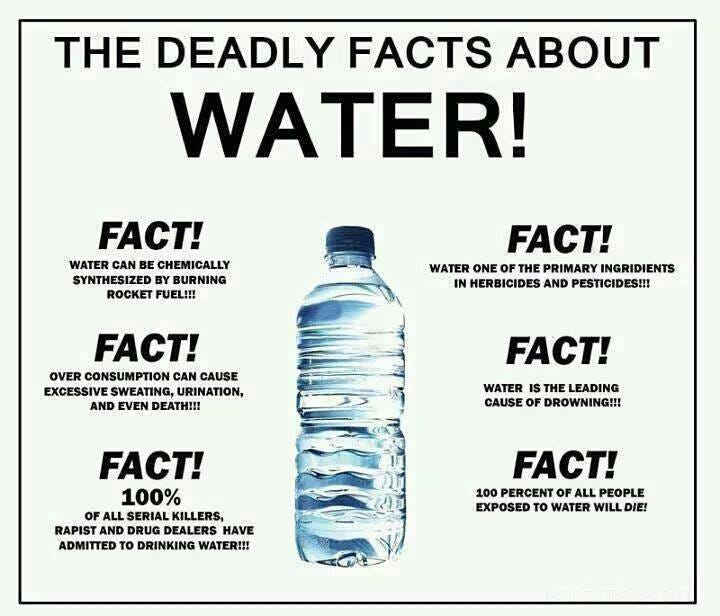

The fingerprints of misinformation

Misinformation is thought to have certain characteristics (“fingerprints”) that are less common in high-quality information and signal its lack of reliability:

Strong Emotional Appeal: Misinformation often uses emotional and moralistic language to provoke strong reactions.

Clickbait/Sensationalist Headlines: Exaggerated, divisive or sensational headlines that make unsupported claims.

Dubious Sources/Fake URLs: Sources known to spread fake news or websites using URLs that mimic reputable news websites.

Absence of Authorship or Date: A lack of transparency about who authored the content or when it was published.

Overtly Partisan Content: Blaming the “other side” or casting some group or person as a villain.

Flagrant use of Logical Fallacies: Misleading content often relies on flawed arguments or reasoning, such as ad hominem attacks or straw men.

Manipulated or Decontextualized Images and Videos: Highly edited or out-of-context content used to support false or misleading narratives.

Promotion of Conspiracy Theories: Content promoting grand conspiracy theories that lack evidence and contradict established knowledge (defending its lack of evidence with claims that the "truth" has been suppressed by mainstream media or governments).[2]

The purpose of these fingerprints is to act as heuristics for spotting misinformation. Content with these markers may not be false but is more likely to be misinformation. Put differently, if you were to bet that a piece of content showing these markers is misinformation, judging only by its style, context and source, you would be right more than 50% of the time.

There’s some recent research that suggests that fingerprints can help identify misinformation. For example, van der Linden has conducted experiments to test if people can be inoculated against the misinformation “virus” by learning about it and its fingerprints. In several of the experiments, participants play a game where they first get inoculated with a “weakened dose” of the virus by creating misinformation themselves and then practice spotting it. In these experiments, most participants got better at discerning between misinformation and “true” information after playing. Still, this research is not without its critics.

Do we need a vaccine for misinformation?

The declaration by the WEF that misinformation is the biggest global risk has been met with a lot of criticism by commentators and experts alike. Critics argue that it's an excuse for governments to dismiss or censor ideas they don’t like and that political bias undermines the scientific study of misinformation more broadly. In their view, fears about an impending misinformation apocalypse are overblown and out of proportion to the actual risk, despite the amount of coverage the topic receives in the media.

Misinformation about misinformation

The philosopher Dan Williams, among others, has outlined in great detail some of the reasons to doubt that misinformation is anything like a virus and has questioned the viability of misinformation research as a scientific endeavor.

To begin with, the sort of studies mentioned above seem to just make participants rate most content as false or misleading. Rather than becoming more accurate, participants end up more skeptical of all content across the board, which isn’t always a good thing.

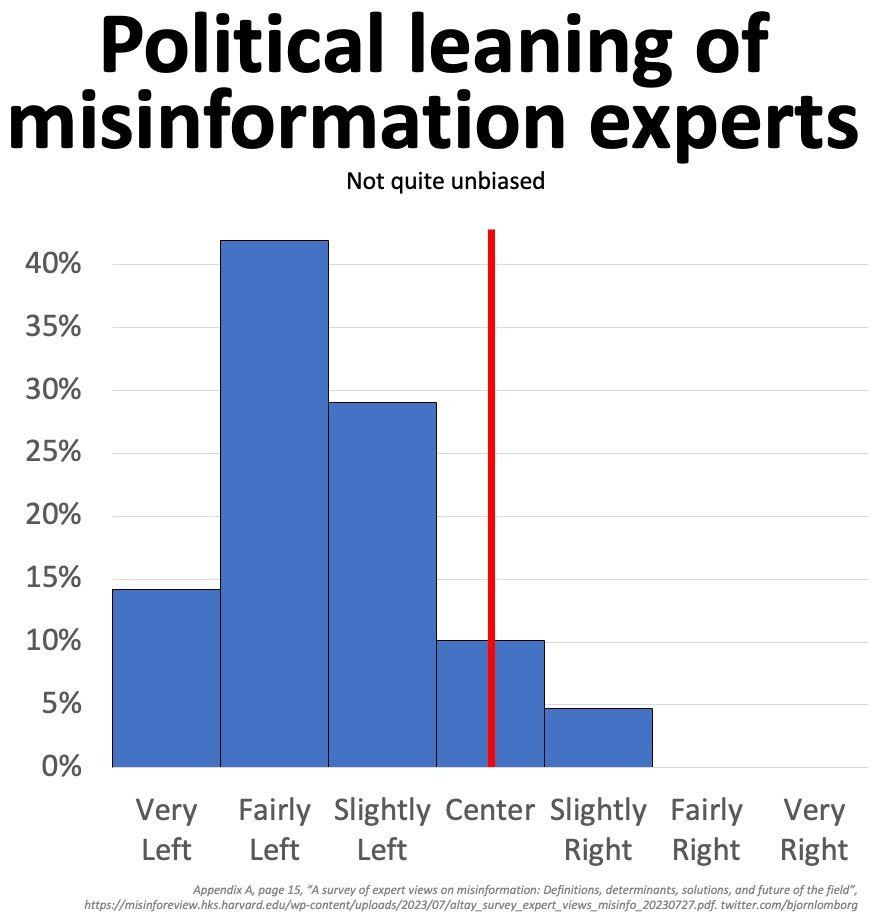

Second, with a broad enough definition, the demarcation line between misinformation and information is vague and subjective. Ideological bias creeps in when researchers select misinformation examples, and there's an acknowledged left-leaning political direction among experts:

This isn’t a postmodernist critique where the truth is purely subjective; climate change is real, vaccines are generally safe and effective, and the moon landing did really happen. But when almost anything can be misinformation, "experts" and institutions can easily dismiss certain sources, mediums or types of information they don't like as such. This doesn’t have to be a result of a massive elite conspiracy to withhold the truth from the public, just a result of groupthink, political bias and/or paternalism. If misinformation is largely a matter of what the bourgeoisie recognizes as such, the credibility and usefulness of their efforts are undermined, making it harder to reach the people they most need to persuade. Their efforts end up being seen as a form of propaganda itself.

Third, false or fabricated misinformation is generally easy to spot and, according to research, quite rare. This makes sense because even though some people will adopt and spread extremely crazy ideas (e.g., the flat earth theory and QAnon), the majority dismisses them over time, and they don’t gain a strong foothold among the general public. Contrary to what most would assume, myself included, beliefs in conspiracy theories have not increased over the past decades despite the rise of digital and social media.

Why does it seem like misinformation is so prevalent? Due to the Internet and social media, a lot of fringe ideas, conspiracy theories, and noise that used to remain hidden from public discourse have percolated up to the surface of public consciousness. There’s just more information overall.

Moreover, modern news reporting is actually more accurate and covers a broader range of topics, better reflecting the complexities of the world. In this way, people are more informed today rather than less. Yet, it also contributes to a more fragmented, messy picture of the world. One that is more honest but that feels worse compared to the “good old days” (that never existed).

Fourth, there’s a difference between someone seeing a video on YouTube or Instagram, reading an article, or even sharing it on social media and actually believing the claims or taking real-world actions (like protesting or not taking a vaccine). So, while it’s true that much of what is shared on social media is highly engaging, surprising, inflammatory, or moralistic - a lot of which can probably be fairly categorized as misinformation - it doesn’t mean people believe everything or take it that seriously. We should probably see much of that content as entertainment rather than as part of forming accurate opinions.

Finally, it’s the misinformation that lacks obvious fingerprints but is still untrue, deceptive or incomplete that should worry us the most, especially when it's from credible, mainstream news outlets or institutions. What’s more, in some cases, misinformation may exhibit the opposite traits, such as a complete lack of emotive language, giving it a false veneer of professionalism and authenticity.

Why do people fall for misinformation?

To ask this question is to assume that people are essentially biased and gullible, susceptible to populist, emotional and polarizing messages, and in need of a "misinformation vaccine," as it were. From this perspective, misinformation is a big problem because any "bad" idea that people come across will instantly infect them with its misleading or false nature. The solution, presumably, is to prevent people from getting exposed to bad ideas and ensure they only come in contact with good ones.

Another unstated assumption is that misinformation happens to other people, that the general public is passive and completely open-minded, and is easily manipulated by evil forces (typically foreign governments, right-wing populists or Internet trolls).

But this picture is wrong or at least incomplete. First, the public is as much a part of disseminating misinformation as they are recipients. Some people are much bigger spreaders than most, but they aren’t just pushing false narratives on an unsuspecting audience. Rather, they share it because there exists a market for such narratives. In this way, misinformation is "pulled" from the creators or spreaders by their audience. Misinformation (and media broadly) is demand-driven.

Second, people are less persuadable than you’d think. It's true that we are very biased in many ways; there are hundreds of known cognitive biases. But these heuristics have evolved for a reason, and they don't make us completely irrational or gullible.

Take confirmation bias, the Rolls Royce of biases. It's the tendency for people to believe or to adopt new ideas or information when it comports with their existing beliefs. It's a big reason people refuse to adopt correct beliefs, but it also prevents them from buying into dubious ideas they come across. This may be why misinformation doesn't reliably change people's beliefs. To be sure, this kind of epistemic stubbornness can be quite bad, too - we want people to believe true things - but it doesn't point to the prevalence of misinformation itself as the main cause of widespread misperceptions.

Causality

Does misinformation by itself cause people to adopt (false) beliefs, or do people already have them, with misinformation serving as a form of post-hoc justification? If it is purely a symptom of a deeper pathology like institutional distrust, paranoia, or a given group identity, it would mean that a complete absence of misinformation wouldn’t make any difference to what people believe. But this seems implausible. In a counterfactual world with no misinformation, how would false beliefs arise?

For example, why are so many people hesitant about vaccines? Perhaps it's natural to feel that injecting yourself with a disease to avoid a disease is icky and wrong. Yet claims about unlikely side effects, or the idea that vaccines cause autism (which is false), are specific ideas that people need to be exposed to, and that can influence their views and willingness to get vaccinated.

That said, as Dan Williams has argued, misinformation by itself is probably not the main problem. It's more of a symptom than a cause:

[…] although some experimental evidence suggests that people can be persuaded to abandon false beliefs, such interventions rarely cause people to change more basic attitudes, such as voting or vaccination intentions, suggesting that consuming misinformation often serves to rationalise pre-existing inclinations rather than cause them.

[…] research exploring what happens when the supply of misinformation is restricted suggests that it often does not reduce people’s overall engagement with it. For example, when misinformation on Facebook was reduced during an accidental outage, people simply searched for misinformation elsewhere, and Meta’s intense efforts to censor anti-vaccine content during the Covid-19 pandemic did not reduce engagement with such content on the platform, precisely because people circumvented the censorship and sought it out in other ways.

As a result, I think there’s a causal link between a person’s prior susceptibility to, exposure to, and adoption of new information. When people are unfamiliar with a topic, new information has a bigger impact. People are more likely to accept and spread information that aligns with their worldviews, psychology, ingroup identities, or otherwise confers social status.

Epistemic vigilance

Contrary to the popular notion that most people are gullible, a growing body of research suggests that we have evolved a form of "open vigilance" toward new information. For example, in their research on evolutionary and cognitive psychology, Hugo Mercier and his colleagues have shown that we have evolved a set of sophisticated cognitive tools that help us balance openness with skepticism. For every person who falls for a scam or guru, a thousand people don't buy it.

People can be rational and evaluate new information honestly when there are clear incentives to form accurate beliefs, yet tend to be vigilant against outgroup beliefs. Because of this epistemic vigilance, they can change their minds in the presence of good arguments, especially from trusted sources, but aren’t easily persuaded in general. According to Mercier and his colleagues, this is why state propaganda doesn't work very well and fails to persuade most people who don’t already agree with it.

A good, rational argument presents information in a series of logical steps. It may not always change minds entirely, but it can shift people's beliefs in the direction of the argument. The flip side of this is that good arguments don’t have to be correct. You can construct good arguments for bad or false ideas that, given the right context and the right recipients, will land in just the right way.

When an argument manages to make contact with our epistemic machinery in just the right way, it’s almost as if we can’t help but be persuaded by it. For example, if you understand the rules of arithmetic and someone convinces you of the correctness of the statement 2+2=4, it will be impossible to believe otherwise.

Unfortunately, few things of great importance are like arithmetic or trivial logic puzzles. Instead, worldly and consequential issues are endlessly complex and entangled, directly or indirectly, with people's identities. This makes it very hard for people to parse them objectively, even when presented with good arguments. The complexity of most real-world problems also means that the right answer is almost always hard to get at, and experts will have fair disagreements. Scientific consensus is often a decent shorthand for the truth, but it is not “The Truth.”

Contestation of truth claims among experts does not mean that we know nothing or that anyone’s version of reality is just as good as anyone else’s version. It does mean that truth often sits in abeyance, meaning that there are typically and simultaneously multiple valid truth claims — contested certainties as Steve Rayner used to call them. Such excess of objectivity unavoidably provides fertile ground for cherry-picking and building a case for vastly different perspectives and policy perspectives.

- Political Scientist Roger Pielke Jr. on the role of experts and truth.

We will readily lean on the scientific consensus when it comports with our prior beliefs or dismiss it in favor of rogue truth-telling scientists when it doesn’t. So, our problem is motivated reasoning rather than gullibility. This kind of reasoning can both shield us from false or potentially misleading information and make us more susceptible depending on our prior motivations.

This paints a picture of people as neither overly gullible nor as hopelessly close-minded. If anything, our problem is one of epistemic stubbornness.

Misinformation isn’t a problem of stupidity

There's also the question of people's intelligence or cognitive abilities. To what extent does higher intelligence protect against misinformation, scams, or conspiracy theories? It makes intuitive sense that smart people can process information more accurately than dumber people, and research shows that better cognitive abilities do help in these respects. On the other hand, because smart people are better at reasoning, they are also at risk of arguing for or defending beliefs that comport with their identities and prior beliefs - some of which may be false or misguided. In other words, while stupid people may be more easily persuaded by others, smarter people may be more easily persuaded by themselves (and the easiest person to fool is yourself).

The marketplace of rationalizations

How you think we should deal with misinformation has a lot to do with how you view the power of ideas and their ability to spread among people.

The best ideas are assumed to prevail over time through open and honest debate, which is something I assumed as well. The naïve view of rationality is that we (can) assess information objectively as neutral agents. Unfortunately, the extent to which we adopt or hold on to ideas is modulated by our motivations, identities, culture, etc.

We rationalize our behavior and beliefs rather than being objectively rational. We are good at finding reasons for our initial intuitions rather than looking at an issue from a more neutral point of view. This is not always irrational, but will invariably make us fail to accept certain important ideas or pieces of information.

Evolutionarily speaking, the function of reasoning is to argue with others. Rationality isn't achieved individually but socially. That's why the idea of a marketplace of ideas is actually more like a marketplace of rationalizations. There is a supply of and a demand for (mis)information, a marketplace where people “buy” and “sell” ideas not based on truth-seeking motives but for acquiring status within their in-group (and sometimes for financial gain). More than they want the truth, people want to discover reasons (rationalizations) for their beliefs. They “shop around” the marketplace, with sellers more than happy to sell them reasons they find compelling.

Perhaps better and true ideas do prevail over worse ones over the long term, but it’s never a straight line.

The role of experts and institutions

For all its faults and biases, the mainstream media rarely lies, and government institutions in democratic developed nations are generally honest and useful. When the media spreads misinformation, it’s often a result of good intentions, bias or (unintentional) omission of certain facts.[3]

Nevertheless, most influential elites, academia, and mainstream media have a liberal or left-leaning bias, making the classification of misinformation potentially value-laden or biased. That doesn’t mean they're completely wrong about what is or isn’t misinformation. But it does mean that attempts to address it and spread important true information to the public risk becoming a partisan battleground.

Worse still, in their attempts to address what they see as misinformation, both the mainstream media and governments often try to silence, censor, cancel, or discredit dissenting or opposing viewpoints. This might not be as harsh as outright banning certain forms of speech. It can be done by officials labeling dissenting or non-mainstream opinions as misinformation. Or it can result from elites and mainstream culture narrowing the Overton window of permissible opinions and speech.

Both of these hard and soft kinds of censorship tend to have the opposite effect of what is intended. Restricting fringe opinions will sow more distrust of the elites and their institutions, leading people to seek alternate sources of information and potentially adopt erroneous beliefs. The conspiratorial mind will readily see such flagrant displays of power as proof of whatever conspiracy they already suspected to be true.

So, the way to deal with misinformation, to the extent that it is false or truly misleading, is not censorship, shaming or quieting non-mainstream views and information. It’s to foster trust in institutions and the scientific process. Some more humility in public messaging and seeing the public as participants rather than as untrustworthy people who must be told what to do would help.

During the COVID-19 pandemic, Western governments and public health agencies struggled with health recommendations and policies. A major challenge for governments was how to communicate the need for people to get vaccinated, given how vaccines work and the need to create herd immunity within the population. There was already a lot of vaccine skepticism present, and with the quick development of mRNA vaccines, fears of side effects grew quickly. When information about side effects within certain sub-populations started getting out, vaccine deniers and skeptics jumped on the opportunity to discredit the vaccines or cast doubt on their efficacy. In response, many social media platforms started issuing warnings whenever vaccines were discussed in a negative light. This probably had little effect on the spread of misinformation - to the extent that it actually was misinformation - and, if anything, just exacerbated the anti-institution sentiments of some parts of society.[4]

Nevertheless, the people who strongly distrust mainstream institutions and media make the mistake of assuming nefarious intent behind what is essentially just an overly paternalistic but largely well-meaning effort to "protect" people from certain forms of information.

(The caveat to this is that it mostly applies to developed, rich countries. It's possible that false information can spread more easily in lower-income or developing countries, probably due to low levels of education, the higher prevalence of pre-industrial belief systems and so on. On the other hand, it’s not irrational to believe in conspiracies and distrust the government in places where it often is corrupt and unreliable.)

Technology and (mis)information

New information technologies have always had a profound impact on how we consume information and communicate with each other. This includes everything from the evolution of language itself, then writing, the printing press, radio & TV, and of course, the Internet. Technology can, therefore, help spread both true and false information and everything in between. So it seems a foregone conclusion that AI, too, will make misinformation worse - much worse.

We can readily imagine how Large Language Models (LLMs) and other Generative AI models can be used to create accurate, convincing and high-quality misinformation or disinformation at an unprecedented scale. As these AI models improve, they might produce content indistinguishable from human-created content - in some cases, we're already there. Not only that, but they will be superhuman in their abilities to persuade us in a way that “regular” misinformation or fraudsters generally can’t. So much for epistemic vigilance!

AI and automated content creation could possibly worsen the problem of poor-quality information online. For example, it’s assumed that a rather large share of accounts on X (Twitter) are generated by bots that create or spread misleading or fake content. And the problem could potentially get worse. But communication is a two-way process. It's not enough to transmit some information; the recipient must also understand it, accept it and believe it.

Moreover, it’s unlikely that the small percentage of people who engage with the lion’s share of misinformation will increase due to an explosion of AI-based misinformation. We have limited time and attention; we can't consume much more content than we already do because the amount of human-created content already takes up most of our bandwidth.

There’s also the widely accepted idea that social media has created filter bubbles that are entrenching people in very narrow and skewed information environments. But despite all the time many people spend online, this problem seems overstated since the vast majority of people get information from a variety of sources, including mainstream media.

Nevertheless, one might still contend that with AI, it’s going to be different because AIs are capable of advanced forms of reasoning, personalized communication, and persuasion. But this seems unlikely. We already live in a world where the cost of creating high-quality content is very low. It’s been possible to fabricate text and images with ease for decades. The tools available to fraudsters are accessible to legitimate journalists, so the quality of credible news and content would increase as well.

I also doubt that it’s possible to create content that’s orders of magnitude more persuasive than “regular” misinformation already is (or isn’t). Not because I doubt AI models will improve but because the impact of more persuasive content would have diminishing returns; there’s a practical limit to how easily people can be persuaded.

What matters more than the persuasiveness of the content is the content itself and how that makes contact with your prior beliefs and your identity. Short of surgical or chemical manipulation of our brains, an order of magnitude better persuasion skills won't change the information itself.[5]

Now, all this doesn’t mean that manipulated or fabricated content like deep fakes is not a problem at all or that no one will ever fall for it. Today, deep fakes are nearly indistinguishable from real content. Fraudsters and scammers have already leveraged chatbots that can engage in realistic conversations (whether in text or voice) to impersonate real people, obtain personal information, trick them into sending money and so on. Many fear it can get a lot worse. But society has adapted both technically and socially to all kinds of communication technologies that were at some point used to scam people more easily.

Governments also worry that the proliferation of AI-generated misinformation might reduce people’s trust in them. But as the political scientist and blogger Richard Hanania points out, rather than disempowering governments, AI and deep fake tech might make them more powerful:

“…cheap and easily available deepfakes will cause most people to adopt a reasonable prior of “everything I see or hear on the internet is fake, unless it comes from a credible news source.” That’s already the standard for text, so there’s no reason it can’t also apply to audio and video.”

The point is that if anything can be fake, then you can only trust information based on the context or the sender. And to the extent that governments, whether democratic or authoritarian, are trusted as "authorities" by most people, it's possible that we will just trust information from far fewer sources.

Conclusions

For as long as I can remember, I have been equally intrigued and perturbed by the reliability with which we misperceive and misjudge reality. The commonness of conspiratorial, magical thinking and pre-industrial, anti-scientific beliefs.

I approached this topic wanting to figure out the best ways to prevent people from adopting bad ideas, how to change people’s minds and stop the spread of misinformation. Ironically, the more I read about the problem, the less worried I became about it in general and the more I started wondering about the ways that I may be misinformed myself.

I now see the production and consumption of false or misleading information as a multi-causal phenomenon driven by a marketplace of rationalizations. It's not mainly a problem of technology or gullibility. It's a problem of institutional distrust and motivated reasoning shaping the information landscape.

In this marketplace, the consumption of misinformation looks more like a symptom of underlying issues and beliefs than the cause of them. It’s also true, however, that certain ideas and information can’t spontaneously arise in people's minds without prior exposure. In this way, misinformation is part of a causal chain or cycle that perpetuates false or misleading ideas depending on individuals' predisposition to seek out or accept such information.

Therefore, the focus on preventing the spread of misinformation, in the narrow sense of false or low-quality information like conspiracy theories, is largely misguided. Misinformation in the broader sense, especially high-quality information crafted and communicated by political or cultural elites, that includes true but misleading, cherry-picked, or exaggerated content, seems like a much more pernicious problem. When people and institutions most of us trust spread information or narratives that are even slightly false (or silence inconvenient truths), it can have a much bigger negative impact than fringe ideas ever have.

This is why the idea of educating people about the fingerprints of misinformation and expecting it to work like a vaccine isn't a very effective solution. It’s ineffective for the same reason that just promoting "critical thinking" as a means of making people less gullible doesn’t do much. To really know whether a claim is true, half-true or false, you need to know facts about the matter at hand. It might help on the margin to have some critical thinking tools or be familiar with common persuasion techniques used by charlatans. But if you know almost nothing about a subject, it’s very hard to avoid being fooled. Generalities and surface-level fingerprints that only address the “symptoms” won’t cure the disease.

Is misinformation research a waste of time? It’s good that academics experiment with interventions for improving critical thinking and study how people consume information and form beliefs. However, because the categorization of (mis)information by academics is inevitably biased or selective, it may reduce the broad applicability of the research. In addition, since a small share of people consume the majority of “hardcore” misinformation, focusing on preventing its consumption will have limited effects overall.

As most people are already skeptical of sources they don’t trust, it may be more fruitful to focus on improving trust in reliable sources rather than just making people indiscriminately skeptical. To improve trust, the first step must be to acknowledge the primacy of truth and the need to have honest conversations about complex ideas, and to avoid the instinct to silence or censor fringe ideas (socially and politically).

We should do our best to promote the importance of upward mobility and economic growth, as improving standards of living and the ability for people to “determine” the course of their lives can reduce beliefs in conspiracy theories and make them less likely to turn against society.

Misinformation is a problem to take seriously, and many people are misinformed or confused about a whole host of important things. But misinformation itself is not the only or main reason for that, and it isn’t a world-ending virus that will upend free and democratic society.

[1] Fake news became a widely recognized phenomenon after the 2016 US elections and was believed by many to have swayed the election in favor of Donald Trump. But its effects are most likely negligible. At least in the US, literal fake news has tended to be more right-wing or conservative, and the people who consume (and presumably believe) such fake news are typically not undecided or center voters but very right-wing. The vast majority (of the minority) that consume fake news, therefore, have already decided whom they will vote for, and so election results aren't impacted by that kind of mis- or disinformation.

[2] Content promoting conspiracy theories tends to employ a set of very recognizable techniques (fingerprints). It is instructive to see these techniques in action when you know for a fact that there is no conspiracy:

[3] A good example is the often poor communication of science and technical news. As information passes from the academic press to journalists, there are often unconscious (but biased) editorial simplifications or omissions of facts.

[4] I should say that I think the evidence shows that the COVID-19 vaccines work quite well and that the benefits of taking the vaccine, as well as the boosters, far outweigh the risks for most people. Though there certainly are a lot of crackpots out there, it's not crazy to worry about side effects. In an effort to get as many people as possible to take the vaccines, public health officials understandably sought to downplay the risks (known or unknown) of taking vaccines. This probably made things worse. A different approach would have been to speak more honestly about what was known and what was unknown and clearly state that, although there are some risks, the benefits far outweigh the negatives. When some side effects are shown or when policy mistakes are made, the skeptics and crackpots are only emboldened even more. Epistemic humility, explaining the reasoning of your decisions and communicating openly are better ways of gaining people's trust than pretending to be certain, especially if you turn out to make mistakes.

[5] To the extent that AIs can be used to persuade people of misinformation, they can also be used to try to rid people of belief in conspiracy theories. A recent study found that by chatting with an LLM, people who believe in a conspiracy theory can reduce their belief in that idea by 20% on average. This is not an insignificant finding if it’s applicable on a much broader scale. And if we take the reverse as true as well, LLMs could persuade people who don’t believe in a conspiracy theory to think it’s 20% more likely than they did before. Either way, this doesn’t entail a world-ending infodemic brought about by AI-generated misinformation.